title (string) | body (string, nullable) | html_url (string) | comments (list) | pull_request (dict) | number (int64) | is_pull_request (bool) |

|---|---|---|---|---|---|---|

[Cmrc 2018] fix cmrc2018 | https://github.com/huggingface/datasets/pull/99 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/99",

"html_url": "https://github.com/huggingface/datasets/pull/99",

"diff_url": "https://github.com/huggingface/datasets/pull/99.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/99.patch",

"merged_at": "2020-05-14T08:49:41"

} | 99 | true | |

Webis tl-dr | Add the Webis TL;DR dataset. | https://github.com/huggingface/datasets/pull/98 | [

"Should that rather be in an organization scope, @thomwolf @patrickvonplaten ?",

"> Should that rather be in an organization scope, @thomwolf @patrickvonplaten ?\r\n\r\nI'm a bit indifferent - both would be fine for me!",

"@jplu - if creating the dummy_data is too tedious, I can do it as well :-) ",

"There is... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/98",

"html_url": "https://github.com/huggingface/datasets/pull/98",

"diff_url": "https://github.com/huggingface/datasets/pull/98.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/98.patch",

"merged_at": "2020-05-14T20:54:15"

} | 98 | true |

[Csv] add tests for csv dataset script | Adds dummy data tests for csv. | https://github.com/huggingface/datasets/pull/97 | [

"@thomwolf - can you check and merge if ok? "

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/97",

"html_url": "https://github.com/huggingface/datasets/pull/97",

"diff_url": "https://github.com/huggingface/datasets/pull/97.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/97.patch",

"merged_at": "2020-05-13T23:23:15"

} | 97 | true |

lm1b | Add lm1b dataset. | https://github.com/huggingface/datasets/pull/96 | [

"I might have a different version of `isort` than others. It seems like I'm always reordering the imports of others. But isn't really a problem..."

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/96",

"html_url": "https://github.com/huggingface/datasets/pull/96",

"diff_url": "https://github.com/huggingface/datasets/pull/96.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/96.patch",

"merged_at": "2020-05-14T14:13:29"

} | 96 | true |

Replace checksums files by Dataset infos json | ### Better verifications when loading a dataset

I replaced the `urls_checksums` directory, which used to contain `checksums.txt` and `cached_sizes.txt`, with a single file, `dataset_infos.json`. It's just a dict `config_name` -> `DatasetInfo`.

It simplifies and improves how verifications of checksums and splits sizes ... | https://github.com/huggingface/datasets/pull/95 | [

"Great! LGTM :-) ",

"> Ok, really clean!\r\n> I like the logic (not a huge fan of using `_asdict_inner` but it makes sense).\r\n> I think it's a nice improvement!\r\n> \r\n> How should we update the files in the repo? Run a big job on a server or on somebody's computer who has most of the datasets already downloa... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/95",

"html_url": "https://github.com/huggingface/datasets/pull/95",

"diff_url": "https://github.com/huggingface/datasets/pull/95.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/95.patch",

"merged_at": "2020-05-14T08:58:42"

} | 95 | true |
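
A minimal sketch of the `dataset_infos.json` layout described in PR #95 (a dict `config_name` -> `DatasetInfo`), assuming a heavily simplified info class. `DatasetInfoSketch`, `SplitInfo`, their fields, and the `verify_split_sizes` helper are hypothetical stand-ins, not the library's actual classes. Serialization relies on `dataclasses.asdict`, which recurses through nested dataclasses and dicts.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict


@dataclass
class SplitInfo:
    # Hypothetical, simplified split record
    name: str
    num_examples: int


@dataclass
class DatasetInfoSketch:
    # Hypothetical stand-in for a real DatasetInfo; only a few fields are modeled
    description: str = ""
    splits: Dict[str, SplitInfo] = field(default_factory=dict)
    download_checksums: Dict[str, str] = field(default_factory=dict)


def dump_infos(infos: Dict[str, DatasetInfoSketch]) -> str:
    # One JSON object keyed by config name, replacing separate checksum/size files
    return json.dumps({name: asdict(info) for name, info in infos.items()}, indent=2, sort_keys=True)


def load_infos(text: str) -> Dict[str, DatasetInfoSketch]:
    # Rebuild the nested dataclasses from the plain JSON dicts
    raw = json.loads(text)
    return {
        name: DatasetInfoSketch(
            description=d["description"],
            splits={k: SplitInfo(**v) for k, v in d["splits"].items()},
            download_checksums=d["download_checksums"],
        )
        for name, d in raw.items()
    }


def verify_split_sizes(expected: DatasetInfoSketch, actual_counts: Dict[str, int]) -> None:
    # Raise if a generated split does not have the recorded number of examples
    for name, split in expected.splits.items():
        if actual_counts.get(name) != split.num_examples:
            raise ValueError(
                f"Split {name!r}: expected {split.num_examples} examples, got {actual_counts.get(name)}"
            )
```

Keeping every config in one file means a single read gives both the checksums and the expected split sizes for whichever config is being loaded.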

Librispeech | Add librispeech dataset and remove some useless content. | https://github.com/huggingface/datasets/pull/94 | [

"@jplu - I changed this weird archieve - iter method to something simpler. It's only one file to download anyways so I don't see the point of using weird iter methods...It's a huge file though :D 30 million lines of text. Took me quite some time to download :D "

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/94",

"html_url": "https://github.com/huggingface/datasets/pull/94",

"diff_url": "https://github.com/huggingface/datasets/pull/94.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/94.patch",

"merged_at": "2020-05-13T21:29:02"

} | 94 | true |

Cleanup notebooks and various fixes | Fixes on dataset (more flexible) metrics (fix) and general clean ups | https://github.com/huggingface/datasets/pull/93 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/93",

"html_url": "https://github.com/huggingface/datasets/pull/93",

"diff_url": "https://github.com/huggingface/datasets/pull/93.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/93.patch",

"merged_at": "2020-05-13T15:01:47"

} | 93 | true |

[WIP] add wmt14 | WMT14 takes forever to download :-/

- WMT is the first dataset that uses an abstract class IMO, so I had to modify the `load_dataset_module` a bit. | https://github.com/huggingface/datasets/pull/92 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/92",

"html_url": "https://github.com/huggingface/datasets/pull/92",

"diff_url": "https://github.com/huggingface/datasets/pull/92.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/92.patch",

"merged_at": "2020-05-16T11:17:37"

} | 92 | true |

[Paracrawl] add paracrawl | - Huge dataset - took ~1h to download

- Also this PR reformats all dataset scripts and adds `datasets` to `make style` | https://github.com/huggingface/datasets/pull/91 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/91",

"html_url": "https://github.com/huggingface/datasets/pull/91",

"diff_url": "https://github.com/huggingface/datasets/pull/91.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/91.patch",

"merged_at": "2020-05-13T10:40:14"

} | 91 | true |

Add download gg drive | We can now add datasets that download from Google Drive | https://github.com/huggingface/datasets/pull/90 | [

"awesome - so no manual downloaded needed here? ",

"Yes exactly. It works like a standard download"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/90",

"html_url": "https://github.com/huggingface/datasets/pull/90",

"diff_url": "https://github.com/huggingface/datasets/pull/90.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/90.patch",

"merged_at": "2020-05-13T10:05:31"

} | 90 | true |

Add list and inspect methods - cleanup hf_api | Add a bunch of methods to easily list and inspect the processing scripts uploaded to S3:

```python

nlp.list_datasets()

nlp.list_metrics()

# Copy and prepare the scripts at `local_path` for easy inspection/modification.

nlp.inspect_dataset(path, local_path)

# Copy and prepare the scripts at `local_path` for easy... | https://github.com/huggingface/datasets/pull/89 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/89",

"html_url": "https://github.com/huggingface/datasets/pull/89",

"diff_url": "https://github.com/huggingface/datasets/pull/89.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/89.patch",

"merged_at": "2020-05-13T09:33:10"

} | 89 | true |

Add wiki40b | This is a Beam dataset that downloads files using TensorFlow.

I tested it on a small config and it works fine | https://github.com/huggingface/datasets/pull/88 | [

"Looks good to me. I have not really looked too much into the Beam Datasets yet though - so I think you can merge whenever you think is good for Beam datasets :-) "

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/88",

"html_url": "https://github.com/huggingface/datasets/pull/88",

"diff_url": "https://github.com/huggingface/datasets/pull/88.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/88.patch",

"merged_at": "2020-05-13T12:31:54"

} | 88 | true |

Add Flores | Beautiful language for sure! | https://github.com/huggingface/datasets/pull/87 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/87",

"html_url": "https://github.com/huggingface/datasets/pull/87",

"diff_url": "https://github.com/huggingface/datasets/pull/87.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/87.patch",

"merged_at": "2020-05-13T09:23:33"

} | 87 | true |

[Load => load_dataset] change naming | Rename leftovers @thomwolf | https://github.com/huggingface/datasets/pull/86 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/86",

"html_url": "https://github.com/huggingface/datasets/pull/86",

"diff_url": "https://github.com/huggingface/datasets/pull/86.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/86.patch",

"merged_at": "2020-05-13T08:50:57"

} | 86 | true |

Add boolq | I just added the dummy data for this dataset.

This one uses `tf.io.gfile.copy` to download the data, so I added support for custom downloads in the `mock_download_manager`. I also had to add a `tensorflow` dependency for the tests. | https://github.com/huggingface/datasets/pull/85 | [

"Awesome :-) Thanks for adding the function to the Mock DL Manager"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/85",

"html_url": "https://github.com/huggingface/datasets/pull/85",

"diff_url": "https://github.com/huggingface/datasets/pull/85.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/85.patch",

"merged_at": "2020-05-13T09:09:38"

} | 85 | true |

[TedHrLr] add left dummy data | https://github.com/huggingface/datasets/pull/84 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/84",

"html_url": "https://github.com/huggingface/datasets/pull/84",

"diff_url": "https://github.com/huggingface/datasets/pull/84.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/84.patch",

"merged_at": "2020-05-13T08:29:21"

} | 84 | true | |

New datasets | https://github.com/huggingface/datasets/pull/83 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/83",

"html_url": "https://github.com/huggingface/datasets/pull/83",

"diff_url": "https://github.com/huggingface/datasets/pull/83.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/83.patch",

"merged_at": "2020-05-12T18:22:45"

} | 83 | true | |

[Datasets] add ted_hrlr | @thomwolf - After looking at `xnli` I think it's better to leave the translation features and add a `translation` key to make them work in our framework.

The result looks like this:

2. GLEU: Google-BLEU: https://github.com/cnap/gec-... | https://github.com/huggingface/datasets/pull/75 | [

"It's all about my metric stuff so I'll probably merge it unless you want to have a look.\r\n\r\nTook the occasion to remove the old doc and requirements.txt"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/75",

"html_url": "https://github.com/huggingface/datasets/pull/75",

"diff_url": "https://github.com/huggingface/datasets/pull/75.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/75.patch",

"merged_at": "2020-05-13T07:44:10"

} | 75 | true |

fix overflow check | I did some tests and unfortunately the test

```

pa_array.nbytes > MAX_BATCH_BYTES

```

doesn't work. Indeed, for a StructArray, `nbytes` can be less than 2GB even if there is an overflow (it loops...).

I don't think we can do a proper overflow test for the limit of 2GB...

For now I replaced it with a sanity check on... | https://github.com/huggingface/datasets/pull/74 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/74",

"html_url": "https://github.com/huggingface/datasets/pull/74",

"diff_url": "https://github.com/huggingface/datasets/pull/74.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/74.patch",

"merged_at": "2020-05-12T10:04:37"

} | 74 | true |

JSON script | Add a JSON script to read JSON datasets from files. | https://github.com/huggingface/datasets/pull/73 | [

"The tests for the Wikipedia dataset do not pass anymore with the error:\r\n```\r\nTo be able to use this dataset, you need to install the following dependencies ['mwparserfromhell'] using 'pip install mwparserfromhell' for instance'\r\n```",

"This was an issue on master. You can just rebase from master.",

"Per... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/73",

"html_url": "https://github.com/huggingface/datasets/pull/73",

"diff_url": "https://github.com/huggingface/datasets/pull/73.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/73.patch",

"merged_at": "2020-05-18T06:50:36"

} | 73 | true |

[README dummy data tests] README to better understand how the dummy data structure works | This PR adds a README.md to the tests to shed more light on how the dummy data structure works. I try to explain the different possible cases. IMO the best way to understand the logic is to check out the dummy data structure of the datasets I mention in the README.md, since those are the "edge cases".

@... | https://github.com/huggingface/datasets/pull/72 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/72",

"html_url": "https://github.com/huggingface/datasets/pull/72",

"diff_url": "https://github.com/huggingface/datasets/pull/72.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/72.patch",

"merged_at": "2020-05-11T22:26:01"

} | 72 | true |

Fix arrow writer for big datasets using writer_batch_size | This PR fixes Yacine's bug.

According to [this](https://github.com/apache/arrow/blob/master/docs/source/cpp/arrays.rst#size-limitations-and-recommendations), it is not recommended to have pyarrow arrays bigger than 2GB.

Therefore I set a default batch size of 100 000 examples per batch. In general it shouldn't exce... | https://github.com/huggingface/datasets/pull/71 | [

"After a quick chat with Yacine : the 2Go test may not be sufficient actually, as I'm looking at the size of the array and not the size of the current_rows. If the test doesn't do the job I think I'll remove it and lower the batch size a bit to be sure that it never exceeds 2Go. I'll do more tests later"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/71",

"html_url": "https://github.com/huggingface/datasets/pull/71",

"diff_url": "https://github.com/huggingface/datasets/pull/71.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/71.patch",

"merged_at": "2020-05-11T20:00:38"

} | 71 | true |
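
The fix in PR #71 boils down to flushing rows every `writer_batch_size` examples so that no single Arrow array grows past the recommended size. The sketch below shows only that buffering logic in pure Python, assuming a hypothetical `batched` helper; in a real writer each yielded batch would be converted to one Arrow table or record batch, which is omitted here to keep the example dependency-free.

```python
from typing import Any, Dict, Iterable, Iterator, List


def batched(
    rows: Iterable[Dict[str, Any]], writer_batch_size: int = 100_000
) -> Iterator[List[Dict[str, Any]]]:
    # Accumulate rows and emit them in chunks of at most writer_batch_size
    if writer_batch_size <= 0:
        raise ValueError("writer_batch_size must be positive")
    buffer: List[Dict[str, Any]] = []
    for row in rows:
        buffer.append(row)
        if len(buffer) >= writer_batch_size:
            yield buffer  # a real writer would write this chunk to the Arrow file here
            buffer = []
    if buffer:
        yield buffer  # flush the final, possibly smaller, chunk
```

A fixed example count bounds batch size only indirectly: a batch of very large examples can still be big, which is why the batch size may need lowering for datasets with long records.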

adding RACE, QASC, Super_glue and Tiny_shakespear datasets | https://github.com/huggingface/datasets/pull/70 | [

"I think rebasing to master will solve the quality test and the datasets that don't have a testing structure yet because of the manual download - maybe you can put them in `datasets under construction`? Then would also make it easier for me to see how to add tests for them :-) "

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/70",

"html_url": "https://github.com/huggingface/datasets/pull/70",

"diff_url": "https://github.com/huggingface/datasets/pull/70.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/70.patch",

"merged_at": "2020-05-12T13:21:51"

} | 70 | true | |

fix cache dir in builder tests | minor fix | https://github.com/huggingface/datasets/pull/69 | [

"Nice, is that the reason one cannot rerun the tests without deleting the cache? \r\n",

"Yes exactly. It was not using the temporary dir for tests."

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/69",

"html_url": "https://github.com/huggingface/datasets/pull/69",

"diff_url": "https://github.com/huggingface/datasets/pull/69.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/69.patch",

"merged_at": "2020-05-11T07:19:28"

} | 69 | true |

[CSV] re-add csv | Re-adding csv under the datasets under construction to keep circle ci happy - will have to see how to include it in the tests.

@lhoestq noticed that I accidentally deleted it in https://github.com/huggingface/nlp/pull/63#discussion_r422263729. | https://github.com/huggingface/datasets/pull/68 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/68",

"html_url": "https://github.com/huggingface/datasets/pull/68",

"diff_url": "https://github.com/huggingface/datasets/pull/68.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/68.patch",

"merged_at": "2020-05-08T17:40:46"

} | 68 | true |

[Tests] Test files locally | This PR adds an `aws` and a `local` decorator to the tests so that the tests now run on the local datasets.

By default, `aws` is deactivated, `local` is activated and `slow` is deactivated, so that only one test per dataset runs on CircleCI.

**When local is activated all folders in `./datasets` are tested.**

... | https://github.com/huggingface/datasets/pull/67 | [

"Super nice, good job @patrickvonplaten!"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/67",

"html_url": "https://github.com/huggingface/datasets/pull/67",

"diff_url": "https://github.com/huggingface/datasets/pull/67.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/67.patch",

"merged_at": "2020-05-08T15:17:00"

} | 67 | true |
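
One common way to implement opt-in test switches like the `aws`, `local` and `slow` decorators described in PR #67 is to gate tests on environment variables with `unittest.skipUnless`. This is a sketch, not the PR's code: the variable names `RUN_AWS`, `RUN_LOCAL` and `RUN_SLOW` and the helpers `parse_flag` and `make_gate` are assumptions. The defaults mirror the description: `aws` off, `local` on, `slow` off.

```python
import os
import unittest
from typing import Callable


def parse_flag(name: str, default: bool) -> bool:
    # Read an on/off switch from the environment (e.g. RUN_SLOW=1); fall back to the default
    value = os.environ.get(name)
    if value is None:
        return default
    return value.strip().lower() in ("1", "true", "yes", "y", "on")


def make_gate(enabled: bool, reason: str) -> Callable:
    # Leaves the test untouched when enabled, marks it as skipped otherwise
    return unittest.skipUnless(enabled, reason)


# Hypothetical env var names; defaults follow the PR description
aws = make_gate(parse_flag("RUN_AWS", False), "set RUN_AWS=1 to test the scripts on S3")
local = make_gate(parse_flag("RUN_LOCAL", True), "set RUN_LOCAL=1 to test the local ./datasets scripts")
slow = make_gate(parse_flag("RUN_SLOW", False), "set RUN_SLOW=1 to run slow tests")
```

A test method decorated with `@slow` is then reported as skipped unless `RUN_SLOW=1` is exported before running pytest.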

[Datasets] ReadME | https://github.com/huggingface/datasets/pull/66 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/66",

"html_url": "https://github.com/huggingface/datasets/pull/66",

"diff_url": "https://github.com/huggingface/datasets/pull/66.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/66.patch",

"merged_at": "2020-05-08T13:39:22"

} | 66 | true | |

fix math dataset and xcopa | - fixes the math dataset and xcopa; uploaded both of them to S3 | https://github.com/huggingface/datasets/pull/65 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/65",

"html_url": "https://github.com/huggingface/datasets/pull/65",

"diff_url": "https://github.com/huggingface/datasets/pull/65.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/65.patch",

"merged_at": "2020-05-08T13:35:40"

} | 65 | true |

[Datasets] Make master ready for datasets adding | Add all relevant files so that datasets can now be added on master | https://github.com/huggingface/datasets/pull/64 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/64",

"html_url": "https://github.com/huggingface/datasets/pull/64",

"diff_url": "https://github.com/huggingface/datasets/pull/64.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/64.patch",

"merged_at": "2020-05-08T13:17:30"

} | 64 | true |

[Dataset scripts] add all datasets scripts | As mentioned, we can have the canonical datasets in the master. For now I also want to include all the data as present on S3 to make the synchronization easier when uploading new datasets.

@mariamabarham @lhoestq @thomwolf - what do you think?

If this is ok for you, I can sync up the master with the `add_datase... | https://github.com/huggingface/datasets/pull/63 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/63",

"html_url": "https://github.com/huggingface/datasets/pull/63",

"diff_url": "https://github.com/huggingface/datasets/pull/63.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/63.patch",

"merged_at": "2020-05-08T11:34:00"

} | 63 | true |

[Cached Path] Better error message | IMO returning `None` in this function only leads to confusion and is never helpful. | https://github.com/huggingface/datasets/pull/62 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/62",

"html_url": "https://github.com/huggingface/datasets/pull/62",

"diff_url": "https://github.com/huggingface/datasets/pull/62.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/62.patch",

"merged_at": null

} | 62 | true |

[Load] rename setup_module to prepare_module | Rename `setup_module` to `prepare_module` due to issues with pytest's `setup_module` function.

See: PR #59. | https://github.com/huggingface/datasets/pull/61 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/61",

"html_url": "https://github.com/huggingface/datasets/pull/61",

"diff_url": "https://github.com/huggingface/datasets/pull/61.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/61.patch",

"merged_at": "2020-05-08T08:56:16"

} | 61 | true |

Update to simplify some datasets conversion | This PR updates the encoding of `Values` like `integers`, `boolean` and `float` to use python casting and avoid having to cast in the dataset scripts, as mentioned here: https://github.com/huggingface/nlp/pull/37#discussion_r420176626

We could also change (not included in this PR yet):

- `supervized_keys` to make t... | https://github.com/huggingface/datasets/pull/60 | [

"Awesome! ",

"Also we should convert `tf.io.gfile.exists` into `os.path.exists` , `tf.io.gfile.listdir`into `os.listdir` and `tf.io.gfile.glob` into `glob.glob` (will need to add `import glob`)",

"> Also we should convert `tf.io.gfile.exists` into `os.path.exists` , `tf.io.gfile.listdir`into `os.listdir` and `... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/60",

"html_url": "https://github.com/huggingface/datasets/pull/60",

"diff_url": "https://github.com/huggingface/datasets/pull/60.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/60.patch",

"merged_at": "2020-05-08T10:18:24"

} | 60 | true |
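
The casting idea in PR #60 can be sketched as a lookup from a `Value` dtype name to a plain Python constructor, so dataset scripts can yield raw strings or numbers. The dtype names in the table and the `encode_value` helper are assumptions chosen for illustration, not the library's actual encoder.

```python
from typing import Any, Callable, Dict

# Hypothetical dtype -> Python cast table
_PY_CASTS: Dict[str, Callable[[Any], Any]] = {
    "bool": bool,
    "int32": int,
    "int64": int,
    "float32": float,
    "float64": float,
    "string": str,
}


def encode_value(dtype: str, value: Any) -> Any:
    # Cast a raw value with the Python constructor registered for its dtype
    try:
        cast = _PY_CASTS[dtype]
    except KeyError:
        raise ValueError(f"Unsupported dtype: {dtype}") from None
    return cast(value)
```

Note that `bool()` follows Python truthiness, so `encode_value("bool", "False")` returns `True`; string-encoded booleans would need explicit parsing before casting.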

Fix tests | @patrickvonplaten I've broken the tests a bit with #25 while simplifying and reorganizing the `load.py` and `download_manager.py` scripts.

I'm trying to fix them here, but I'm getting a weird error. Could you have a look?

```bash

(datasets) MacBook-Pro-de-Thomas:datasets thomwolf$ python -m pytest -sv ./test... | https://github.com/huggingface/datasets/pull/59 | [

"I can fix the tests tomorrow :-) ",

"Very weird bug indeed! I think the problem was that when importing `setup_module` we overwrote `pytest's` setup_module function. I think this is the relevant code in pytest: https://github.com/pytest-dev/pytest/blob/9d2eabb397b059b75b746259daeb20ee5588f559/src/_pytest/python.... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/59",

"html_url": "https://github.com/huggingface/datasets/pull/59",

"diff_url": "https://github.com/huggingface/datasets/pull/59.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/59.patch",

"merged_at": "2020-05-08T10:46:51"

} | 59 | true |

Aborted PR - Fix tests | @patrickvonplaten I've broken the tests a bit with #25 while simplifying and reorganizing the `load.py` and `download_manager.py` scripts.

I'm trying to fix them here, but I'm getting a weird error. Could you have a look?

```bash

(datasets) MacBook-Pro-de-Thomas:datasets thomwolf$ python -m pytest -sv ./test... | https://github.com/huggingface/datasets/pull/58 | [

"Wait I messed up my branch, let me clean this."

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/58",

"html_url": "https://github.com/huggingface/datasets/pull/58",

"diff_url": "https://github.com/huggingface/datasets/pull/58.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/58.patch",

"merged_at": null

} | 58 | true |

Better cached path | ### Changes:

- The `cached_path` no longer returns None if the file is missing/the url doesn't work. Instead, it can raise `FileNotFoundError` (missing file), `ConnectionError` (no cache and unreachable url) or `ValueError` (parsing error)

- Fix requests to firebase API that doesn't handle HEAD requests...

- Allow c... | https://github.com/huggingface/datasets/pull/57 | [

"I should have read this PR before doing my own: https://github.com/huggingface/nlp/pull/62 :D \r\nwill close mine. Looks great :-) ",

"> Awesome, this is really nice!\r\n> \r\n> By the way, we should improve the `cached_path` method of the `transformers` repo similarly, don't you think (@patrickvonplaten in part... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/57",

"html_url": "https://github.com/huggingface/datasets/pull/57",

"diff_url": "https://github.com/huggingface/datasets/pull/57.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/57.patch",

"merged_at": "2020-05-08T13:20:28"

} | 57 | true |
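
The error contract in PR #57 (raise instead of returning `None`) can be sketched as follows. This is a hypothetical simplification, not the library's `cached_path`: the `resolve_path` name, the in-memory `cache` dict and the injected `fetch` callable are assumptions, and parsing is reduced to "http(s) URL or local path".

```python
import os
from typing import Callable, Dict
from urllib.parse import urlparse


def resolve_path(url_or_filename: str, cache: Dict[str, str], fetch: Callable[[str], str]) -> str:
    # Return a local path for a URL or file name, raising instead of returning None
    parsed = urlparse(url_or_filename)
    if parsed.scheme in ("http", "https"):
        if url_or_filename in cache:
            return cache[url_or_filename]
        try:
            local_path = fetch(url_or_filename)
        except OSError as err:  # ConnectionError and TimeoutError are OSError subclasses
            raise ConnectionError(f"Couldn't reach {url_or_filename} and no cached copy exists") from err
        cache[url_or_filename] = local_path
        return local_path
    if os.path.exists(url_or_filename):
        return url_or_filename
    if parsed.scheme == "":
        raise FileNotFoundError(f"Local file {url_or_filename} doesn't exist")
    raise ValueError(f"Unable to parse {url_or_filename!r} as a URL or as a local path")
```

Distinct exception types let callers handle "typo in a local path", "offline with no cache" and "unsupported input" separately, which a bare `None` cannot express.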

[Dataset] Tester add mock function | Need to add an empty `extract()` function to make the `hansard` dataset test work. | https://github.com/huggingface/datasets/pull/56 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/56",

"html_url": "https://github.com/huggingface/datasets/pull/56",

"diff_url": "https://github.com/huggingface/datasets/pull/56.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/56.patch",

"merged_at": "2020-05-07T17:52:50"

} | 56 | true |

Beam datasets | # Beam datasets

## Intro

Beam datasets use Beam pipelines for preprocessing (basically lots of `.map` over objects called PCollections).

The advantage of Apache Beam is that you can choose which type of runner you want to use to preprocess your data. The main runners are:

- the `DirectRunner` to run the p... | https://github.com/huggingface/datasets/pull/55 | [

"Right now the changes are a bit hard to read as the one from #25 are also included. You can wait until #25 is merged before looking at the implementation details",

"Nice!! I tested it a bit and works quite well. I will do a my review once the #25 will be merged because there are several overlaps.\r\n\r\nAt least... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/55",

"html_url": "https://github.com/huggingface/datasets/pull/55",

"diff_url": "https://github.com/huggingface/datasets/pull/55.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/55.patch",

"merged_at": "2020-05-11T07:20:00"

} | 55 | true |

[Tests] Improved Error message for dummy folder structure | Improved Error message | https://github.com/huggingface/datasets/pull/54 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/54",

"html_url": "https://github.com/huggingface/datasets/pull/54",

"diff_url": "https://github.com/huggingface/datasets/pull/54.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/54.patch",

"merged_at": "2020-05-06T18:12:59"

} | 54 | true |

[Features] Typo in generate_from_dict | Change `isinstance` test in features when generating features from dict. | https://github.com/huggingface/datasets/pull/53 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/53",

"html_url": "https://github.com/huggingface/datasets/pull/53",

"diff_url": "https://github.com/huggingface/datasets/pull/53.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/53.patch",

"merged_at": "2020-05-07T15:28:45"

} | 53 | true |

allow dummy folder structure to handle dict of lists | `esnli.py` needs that extension of the dummy data testing. | https://github.com/huggingface/datasets/pull/52 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/52",

"html_url": "https://github.com/huggingface/datasets/pull/52",

"diff_url": "https://github.com/huggingface/datasets/pull/52.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/52.patch",

"merged_at": "2020-05-06T13:55:18"

} | 52 | true |

[Testing] Improved testing structure | This PR refactors the test design a bit and puts the mock download manager in the `utils` files as it is just a test helper class.

As @mariamabarham pointed out, creating a dummy folder structure can be quite hard to grasp.

This PR tries to change that to some extent.

It follows the following logic for the `dumm... | https://github.com/huggingface/datasets/pull/51 | [

"Awesome!\r\nLet's have this in the doc at the end :-)"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/51",

"html_url": "https://github.com/huggingface/datasets/pull/51",

"diff_url": "https://github.com/huggingface/datasets/pull/51.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/51.patch",

"merged_at": "2020-05-06T13:20:17"

} | 51 | true |

[Tests] test only for fast test as a default | Test only one config on CircleCI to speed up testing. Add a test over all configs as a slow test.

@mariamabarham @thomwolf | https://github.com/huggingface/datasets/pull/50 | [

"Test failure is not related to change in test file.\r\n"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/50",

"html_url": "https://github.com/huggingface/datasets/pull/50",

"diff_url": "https://github.com/huggingface/datasets/pull/50.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/50.patch",

"merged_at": "2020-05-05T13:02:16"

} | 50 | true |

fix flatten nested | https://github.com/huggingface/datasets/pull/49 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/49",

"html_url": "https://github.com/huggingface/datasets/pull/49",

"diff_url": "https://github.com/huggingface/datasets/pull/49.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/49.patch",

"merged_at": "2020-05-05T13:59:25"

} | 49 | true | |

[Command Convert] remove tensorflow import | Remove all tensorflow import statements. | https://github.com/huggingface/datasets/pull/48 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/48",

"html_url": "https://github.com/huggingface/datasets/pull/48",

"diff_url": "https://github.com/huggingface/datasets/pull/48.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/48.patch",

"merged_at": "2020-05-05T11:13:56"

} | 48 | true |

[PyArrow Feature] fix py arrow bool | To me it seems that `bool` can only be accessed with `bool_` when looking at the pyarrow types: https://arrow.apache.org/docs/python/api/datatypes.html. | https://github.com/huggingface/datasets/pull/47 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/47",

"html_url": "https://github.com/huggingface/datasets/pull/47",

"diff_url": "https://github.com/huggingface/datasets/pull/47.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/47.patch",

"merged_at": "2020-05-05T10:40:27"

} | 47 | true |

[Features] Strip str key before dict look-up | The dataset `anli.py` currently fails because it tries to look up a key `1\n` in a dict that only has the key `1`. Added an if statement that strips the key if it cannot be found in the dict. | https://github.com/huggingface/datasets/pull/46 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/46",

"html_url": "https://github.com/huggingface/datasets/pull/46",

"diff_url": "https://github.com/huggingface/datasets/pull/46.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/46.patch",

"merged_at": "2020-05-05T08:37:44"

} | 46 | true |
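
The look-up fallback in PR #46 can be sketched like this; `label_to_id` and the example mapping are hypothetical, and the actual change lives inside the features encoding code.

```python
from typing import Dict


def label_to_id(names_to_ids: Dict[str, int], key: str) -> int:
    # Try the raw key first; only if it is missing, retry with surrounding whitespace removed
    if key not in names_to_ids:
        key = key.strip()  # e.g. "1\n" read from a file becomes "1"
    return names_to_ids[key]  # still raises KeyError for genuinely unknown labels
```

Stripping only on a miss keeps keys that legitimately contain whitespace working, while fixing labels read with a trailing newline.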

[Load] Separate Module kwargs and builder kwargs. | Kwargs for the `load_module` fn should be passed with `module_xxxx` to `builder_kwargs` of `load` fn.

This is a follow-up PR of: https://github.com/huggingface/nlp/pull/41 | https://github.com/huggingface/datasets/pull/45 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/45",

"html_url": "https://github.com/huggingface/datasets/pull/45",

"diff_url": "https://github.com/huggingface/datasets/pull/45.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/45.patch",

"merged_at": null

} | 45 | true |

[Tests] Fix tests for datasets with no config | Forgot to fix the `None` problem for datasets that have no config in this PR: https://github.com/huggingface/nlp/pull/42 | https://github.com/huggingface/datasets/pull/44 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/44",

"html_url": "https://github.com/huggingface/datasets/pull/44",

"diff_url": "https://github.com/huggingface/datasets/pull/44.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/44.patch",

"merged_at": "2020-05-04T13:28:03"

} | 44 | true |

[Checksums] If no configs exist prevent to run over empty list | `movie_rationales` e.g. has no configs. | https://github.com/huggingface/datasets/pull/43 | [

"Whoops I fixed it directly on master before checking that you have done it in this PR. We may close it",

"Yeah, I saw :-) But I think we should add this as well since some datasets have an empty list of configs and then as the code is now it would fail. \r\n\r\nIn this PR, I just make sure that the code jumps in... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/43",

"html_url": "https://github.com/huggingface/datasets/pull/43",

"diff_url": "https://github.com/huggingface/datasets/pull/43.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/43.patch",

"merged_at": null

} | 43 | true |

[Tests] allow tests for builders without config | Some dataset scripts have no configs - the tests have to be adapted for this case.

In this case the dummy data will be saved as:

- natural_questions

-> dummy

-> -> 1.0.0 (version num)

-> -> -> dummy_data.zip

| https://github.com/huggingface/datasets/pull/42 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/42",

"html_url": "https://github.com/huggingface/datasets/pull/42",

"diff_url": "https://github.com/huggingface/datasets/pull/42.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/42.patch",

"merged_at": "2020-05-04T13:10:48"

} | 42 | true |

[Load module] allow kwargs into load module | Currently it is not possible to force a re-download of the dataset script.

This simple change makes it possible to pass ``force_reload=True`` as ``builder_kwargs`` to the load function in ``load.py``. | https://github.com/huggingface/datasets/pull/41 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/41",

"html_url": "https://github.com/huggingface/datasets/pull/41",

"diff_url": "https://github.com/huggingface/datasets/pull/41.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/41.patch",

"merged_at": "2020-05-04T19:39:06"

} | 41 | true |

Update remote checksums instead of overwrite | When the user uploads a dataset on S3, checksums are also uploaded with the `--upload_checksums` parameter.

If the user uploads the dataset in several steps, then the remote checksums file was previously overwritten. Now it's going to be updated with the new checksums. | https://github.com/huggingface/datasets/pull/40 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/40",

"html_url": "https://github.com/huggingface/datasets/pull/40",

"diff_url": "https://github.com/huggingface/datasets/pull/40.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/40.patch",

"merged_at": "2020-05-04T11:51:49"

} | 40 | true |
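A sketch of the update-instead-of-overwrite behaviour. It assumes a plain-text file with one `<url> <size> <sha256>` entry per line, loosely modelled on the tensorflow-datasets format mentioned in PR 24. The exact format and all function names here are assumptions, and the S3 upload itself is omitted:

```python
def parse_checksums(text):
    """Parse lines of the assumed form '<url> <size> <sha256>' into {url: (size, sha256)}."""
    checksums = {}
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        url, size, digest = line.split()
        checksums[url] = (int(size), digest)
    return checksums


def dump_checksums(checksums):
    """Serialize {url: (size, sha256)} back to one line per URL, sorted for stable diffs."""
    return "".join(f"{url} {size} {digest}\n" for url, (size, digest) in sorted(checksums.items()))


def update_remote_checksums(remote_text, new_checksums):
    """Merge new entries into the existing remote file instead of overwriting it."""
    merged = parse_checksums(remote_text)
    merged.update(new_checksums)  # new values win for URLs present in both
    return dump_checksums(merged)


remote = "https://example.com/train.csv 1200 aaa111\n"
print(update_remote_checksums(remote, {"https://example.com/test.csv": (300, "bbb222")}), end="")
```

Entries from earlier upload steps survive because the new checksums are merged into the parsed remote file rather than replacing it.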

[Test] improve slow testing | https://github.com/huggingface/datasets/pull/39 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/39",

"html_url": "https://github.com/huggingface/datasets/pull/39",

"diff_url": "https://github.com/huggingface/datasets/pull/39.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/39.patch",

"merged_at": "2020-05-04T08:59:49"

} | 39 | true | |

[Checksums] Error for some datasets | The checksums command works very nicely for `squad`. But for `crime_and_punish` and `xnli`,

the same bug happens:

When running:

```

python nlp-cli test xnli --save_checksums

```

leads to:

```

File "nlp-cli", line 33, in <module>

service.run()

File "/home/patrick/python_bin/nlp/commands... | https://github.com/huggingface/datasets/issues/38 | [

"@lhoestq - could you take a look? It's not very urgent though!",

"Fixed with 06882b4\r\n\r\nNow your command works :)\r\nNote that you can also do\r\n```\r\nnlp-cli test datasets/nlp/xnli --save_checksums\r\n```\r\nSo that it will save the checksums directly in the right directory.",

"Awesome!"

] | null | 38 | false |

[Datasets ToDo-List] add datasets | ## Description

This PR acts as a dashboard to see which datasets are added to the library and work.

Circle CI should always be green so that we can be sure newly added datasets are functional.

This PR should not be merged.

## Progress

**For the following datasets the test commands**:

```

RUN_SLOW... | https://github.com/huggingface/datasets/pull/37 | [

"Note:\r\n```\r\nnlp-cli test datasets/nlp/<your-dataset-folder> --save_checksums --all_configs\r\n```\r\ndirectly saves the checksums in the right place, and runs for all the dataset configurations.",

"@patrickvonplaten can you add the link to the PR for the dummy data? ",

"https://github.com/huggi... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/37",

"html_url": "https://github.com/huggingface/datasets/pull/37",

"diff_url": "https://github.com/huggingface/datasets/pull/37.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/37.patch",

"merged_at": null

} | 37 | true |

Metrics - refactoring, adding support for download and distributed metrics | Refactoring metrics to have a loading API similar to the datasets one and improving the import system.

# Import system

The import system has been upgraded. There are now three types of imports allowed:

1. `library` imports (identified as "absolute imports")

```python

import seqeval

```

=> we'll test all the impor... | https://github.com/huggingface/datasets/pull/36 | [

"Ok, this one seems to be ready to merge.",

"> Really cool, I love it! I would just raise a tiny point: the distributed version of the metrics might not work properly with TF because TF handles distribution differently. Why not add a \"framework\" detection and raise a warning when TF is used, saying something like \"n... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/36",

"html_url": "https://github.com/huggingface/datasets/pull/36",

"diff_url": "https://github.com/huggingface/datasets/pull/36.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/36.patch",

"merged_at": "2020-05-11T08:16:00"

} | 36 | true |

[Tests] fix typo | @lhoestq - currently the slow tests fail with:

```

_____________________________________________________________________________________ DatasetTest.test_load_real_dataset_xnli _____________________________________________________________________________________

... | https://github.com/huggingface/datasets/pull/35 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/35",

"html_url": "https://github.com/huggingface/datasets/pull/35",

"diff_url": "https://github.com/huggingface/datasets/pull/35.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/35.patch",

"merged_at": "2020-05-03T13:24:20"

} | 35 | true |

[Tests] add slow tests | This PR adds a slow test that downloads the "real" dataset. The test is decorated as "slow" so that it will not automatically run on circle ci.

Before uploading a dataset, one should manually check that this test passes by running

```

RUN_SLOW=1 pytest tests/test_dataset_common.py::DatasetTest::test_load_real_d... | https://github.com/huggingface/datasets/pull/34 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/34",

"html_url": "https://github.com/huggingface/datasets/pull/34",

"diff_url": "https://github.com/huggingface/datasets/pull/34.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/34.patch",

"merged_at": "2020-05-03T12:18:29"

} | 34 | true |

Big cleanup/refactoring for clean serialization | This PR cleans up many base classes and rebuilds them as `dataclasses`. We can thus use a simple serialization workflow for `DatasetInfo`, including its `Features` and `SplitDict`, based on the `dataclasses` `asdict()` function.

The resulting code is a lot shorter, can be easily serialized/deserialized, dataset info are human-readab... | https://github.com/huggingface/datasets/pull/33 | [

"Great! I think once this is merged, we can make sure that Circle CI stays happy when uploading new datasets. "

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/33",

"html_url": "https://github.com/huggingface/datasets/pull/33",

"diff_url": "https://github.com/huggingface/datasets/pull/33.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/33.patch",

"merged_at": "2020-05-03T12:17:33"

} | 33 | true |
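To illustrate the `asdict()`-based workflow, here is a heavily simplified sketch. These `SplitInfo` and `DatasetInfo` classes are stand-ins with only a few fields, not the library's actual classes (which also carry `Features` and a `SplitDict`):

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict


@dataclass
class SplitInfo:
    name: str
    num_examples: int = 0


@dataclass
class DatasetInfo:
    description: str = ""
    citation: str = ""
    splits: Dict[str, SplitInfo] = field(default_factory=dict)

    def to_json(self) -> str:
        # asdict() recursively converts nested dataclasses, including those stored in dicts.
        return json.dumps(asdict(self), indent=2, sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "DatasetInfo":
        data = json.loads(text)
        # Nested dataclasses come back as plain dicts and must be rebuilt explicitly.
        splits = {key: SplitInfo(**value) for key, value in data.pop("splits", {}).items()}
        return cls(splits=splits, **data)


info = DatasetInfo(description="toy dataset", splits={"train": SplitInfo("train", 10)})
restored = DatasetInfo.from_json(info.to_json())
print(restored == info)  # True
```

Serialization comes for free from `asdict()`. Deserialization still needs one explicit step per nested type, which is the main cost of this design.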

Fix map caching notebooks | Previously, caching results with `.map()` didn't work in notebooks.

To reuse a result, `.map()` serializes the function with `dill.dumps` and then hashes it.

The problem is that when using `dill.dumps` to serialize a function, it also saves its origin (filename + line no.) and the origin of all the `globals` th... | https://github.com/huggingface/datasets/pull/32 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/32",

"html_url": "https://github.com/huggingface/datasets/pull/32",

"diff_url": "https://github.com/huggingface/datasets/pull/32.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/32.patch",

"merged_at": "2020-05-03T12:15:57"

} | 32 | true |

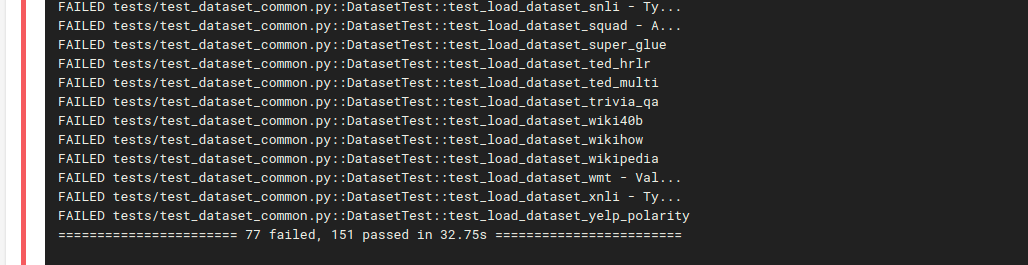

[Circle ci] Install a virtual env before running tests | Install a virtual env before running tests to avoid running into sudo issues when dynamically downloading files.

Same number of tests now pass / fail as on my local computer:

... | https://github.com/huggingface/datasets/pull/31 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/31",

"html_url": "https://github.com/huggingface/datasets/pull/31",

"diff_url": "https://github.com/huggingface/datasets/pull/31.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/31.patch",

"merged_at": "2020-05-01T22:06:15"

} | 31 | true |

add metrics which require download files from github | To download files from github, I copied the `load_dataset_module` and its dependencies (without the builder) in `load.py` to `metrics/metric_utils.py`. I made the following changes:

- copy the needed files into a folder `metric_name`

- delete all other files that are not needed

For metrics that require an external... | https://github.com/huggingface/datasets/pull/30 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/30",

"html_url": "https://github.com/huggingface/datasets/pull/30",

"diff_url": "https://github.com/huggingface/datasets/pull/30.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/30.patch",

"merged_at": null

} | 30 | true |

Hf_api small changes | From Patrick:

```python

from nlp import hf_api

api = hf_api.HfApi()

api.dataset_list()

```

works :-) | https://github.com/huggingface/datasets/pull/29 | [

"Ok merging! I think it's good now"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/29",

"html_url": "https://github.com/huggingface/datasets/pull/29",

"diff_url": "https://github.com/huggingface/datasets/pull/29.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/29.patch",

"merged_at": "2020-04-30T19:51:44"

} | 29 | true |

[Circle ci] Adds circle ci config | @thomwolf can you take a look and set up circle ci on:

https://app.circleci.com/projects/project-dashboard/github/huggingface

I think for `nlp` only admins can set it up, which I guess is you :-) | https://github.com/huggingface/datasets/pull/28 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/28",

"html_url": "https://github.com/huggingface/datasets/pull/28",

"diff_url": "https://github.com/huggingface/datasets/pull/28.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/28.patch",

"merged_at": "2020-04-30T19:51:08"

} | 28 | true |

[Cleanup] Removes all files in testing except test_dataset_common | As far as I know, all files in `tests` were old `tfds test files`, so I removed them. We can still look them up in the other library. | https://github.com/huggingface/datasets/pull/27 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/27",

"html_url": "https://github.com/huggingface/datasets/pull/27",

"diff_url": "https://github.com/huggingface/datasets/pull/27.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/27.patch",

"merged_at": "2020-04-30T17:39:23"

} | 27 | true |

[Tests] Clean tests | The abseil testing library (https://abseil.io/docs/python/quickstart.html) is better than the one I had before, so I switched to it and updated the `setup.py` config file.

Abseil has more support and a cleaner API for parametrized testing I think.

I added a list of all dataset scripts that are currentl... | https://github.com/huggingface/datasets/pull/26 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/26",

"html_url": "https://github.com/huggingface/datasets/pull/26",

"diff_url": "https://github.com/huggingface/datasets/pull/26.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/26.patch",

"merged_at": "2020-04-30T20:12:03"

} | 26 | true |

Add script csv datasets | This PR makes it possible to create datasets from local CSV files. An example usage:

```python

import nlp

ds = nlp.load(

path="csv",

name="bbc",

dataset_files={

nlp.Split.TRAIN: ["datasets/dummy_data/csv/train.csv"],

nlp.Split.TEST: ["datasets/dummy_data/csv/test.csv"]

},

c... | https://github.com/huggingface/datasets/pull/25 | [

"Very interesting thoughts, we should think deeper about all what you raised indeed.",

"Ok here is a proposal for a more general API and workflow.\r\n\r\n# New `ArrowBasedBuilder`\r\n\r\nFor all the formats that can be directly and efficiently loaded by Arrow (CSV, JSON, Parquet, Arrow), we don't really want to h... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/25",

"html_url": "https://github.com/huggingface/datasets/pull/25",

"diff_url": "https://github.com/huggingface/datasets/pull/25.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/25.patch",

"merged_at": "2020-05-07T21:14:49"

} | 25 | true |

Add checksums | ### Checksums files

They are stored next to the dataset script in `urls_checksums/checksums.txt`.

They are used to check the integrity of the datasets downloaded files.

I kept the same format as tensorflow-datasets.

There is one checksums file for all configs.

### Load a dataset

When you do `load("squad")`, i... | https://github.com/huggingface/datasets/pull/24 | [

"Looks good to me :-) \r\n\r\nJust would prefer to get rid of the `_DYNAMICALLY_IMPORTED_MODULE` attribute and replace it by a `get_imported_module()` function. Maybe there is something I'm not seeing here though - what do you think? ",

"> * I'm not sure I understand the general organization of checksums. I see w... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/24",

"html_url": "https://github.com/huggingface/datasets/pull/24",

"diff_url": "https://github.com/huggingface/datasets/pull/24.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/24.patch",

"merged_at": "2020-04-30T19:52:49"

} | 24 | true |

Add metrics | This PR is a draft for adding metrics (sacrebleu and seqeval are added)

use case examples:

`import nlp`

**sacrebleu:**

```

refs = [['The dog bit the man.', 'It was not unexpected.', 'The man bit him first.'],

['The dog had bit the man.', 'No one was surprised.', 'The man had bitten the dog.']]

sys = ['... | https://github.com/huggingface/datasets/pull/23 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/23",

"html_url": "https://github.com/huggingface/datasets/pull/23",

"diff_url": "https://github.com/huggingface/datasets/pull/23.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/23.patch",

"merged_at": null

} | 23 | true |

adding bleu score code | This PR adds the BLEU score metric to the library. It can be tested by running the following code.

` from nlp.metrics import bleu

hyp1 = "It is a guide to action which ensures that the military always obeys the commands of the party"

ref1a = "It is a guide to action that ensures that the military forces always being... | https://github.com/huggingface/datasets/pull/22 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/22",

"html_url": "https://github.com/huggingface/datasets/pull/22",

"diff_url": "https://github.com/huggingface/datasets/pull/22.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/22.patch",

"merged_at": null

} | 22 | true |

Cleanup Features - Updating convert command - Fix Download manager | This PR makes a number of changes:

# Updating `Features`

Features are a complex mechanism provided in `tfds` to be able to modify a dataset on-the-fly when serializing to disk and when loading from disk.

We don't really need this because (1) it hides too much from the user and (2) our datatype can be directly ... | https://github.com/huggingface/datasets/pull/21 | [

"For conflicts, I think the mention hint \"This should be modified because it mentions ...\" is missing.",

"Looks great!"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/21",

"html_url": "https://github.com/huggingface/datasets/pull/21",

"diff_url": "https://github.com/huggingface/datasets/pull/21.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/21.patch",

"merged_at": "2020-05-01T09:29:46"

} | 21 | true |

remove boto3 and promise dependencies | With the new download manager, we don't need `promise` anymore.

I also removed `boto3` as in [this pr](https://github.com/huggingface/transformers/pull/3968) | https://github.com/huggingface/datasets/pull/20 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/20",

"html_url": "https://github.com/huggingface/datasets/pull/20",

"diff_url": "https://github.com/huggingface/datasets/pull/20.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/20.patch",

"merged_at": "2020-04-27T14:15:45"

} | 20 | true |

Replace tf.constant for TF | Replace the simple `tf.constant` Tensor type with `tf.ragged.constant`, which allows examples of different sizes in a `tf.data.Dataset`.

Training now works with TF. Here is the same example as for PT in Colab:

```python

import tensorflow as tf

import nlp

from transformers import BertTokenizerFast, TFBertF... | https://github.com/huggingface/datasets/pull/19 | [

"Awesome!"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/19",

"html_url": "https://github.com/huggingface/datasets/pull/19",

"diff_url": "https://github.com/huggingface/datasets/pull/19.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/19.patch",

"merged_at": "2020-04-25T21:18:45"

} | 19 | true |

Updating caching mechanism - Allow dependency in dataset processing scripts - Fix style and quality in the repo | This PR has a lot of content (might be hard to review, sorry, in particular because I fixed the style in the repo at the same time).

# Style & quality:

You can now install the style and quality tools with `pip install -e .[quality]`. This will install black, the compatible version of isort, and flake8.

You can then ... | https://github.com/huggingface/datasets/pull/18 | [

"LGTM"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/18",

"html_url": "https://github.com/huggingface/datasets/pull/18",

"diff_url": "https://github.com/huggingface/datasets/pull/18.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/18.patch",

"merged_at": "2020-04-28T16:06:28"

} | 18 | true |

Add Pandas as format type | As detailed in the title ^^ | https://github.com/huggingface/datasets/pull/17 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/17",

"html_url": "https://github.com/huggingface/datasets/pull/17",

"diff_url": "https://github.com/huggingface/datasets/pull/17.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/17.patch",

"merged_at": "2020-04-27T18:07:48"

} | 17 | true |

create our own DownloadManager | I tried to create our own - and way simpler - download manager, by replacing all the complicated stuff with our own `cached_path` solution.

With this implementation, I tried `dataset = nlp.load('squad')` and it seems to work fine.

For the implementation, what I did exactly:

- I copied the old download manager

- I... | https://github.com/huggingface/datasets/pull/16 | [

"Looks great to me! ",

"The new download manager is ready. I removed the old folder and I fixed a few remaining dependencies.\r\nI tested it on squad and a few others from the dataset folder and it works fine.\r\n\r\nThe only impact of these changes is that it breaks the `download_and_prepare` script that was use... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/16",

"html_url": "https://github.com/huggingface/datasets/pull/16",

"diff_url": "https://github.com/huggingface/datasets/pull/16.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/16.patch",

"merged_at": "2020-04-25T21:25:10"

} | 16 | true |

[Tests] General Test Design for all dataset scripts | The general idea is similar to how testing is done in `transformers`. There is one general `test_dataset_common.py` file which has a `DatasetTesterMixin` class. This class implements all of the logic that can be used in a generic way for all dataset classes. The idea is to keep each individual dataset test file as mini... | https://github.com/huggingface/datasets/pull/15 | [

"> I think I'm fine with this.\r\n> \r\n> The alternative would be to host a small subset of the dataset on the S3 together with the testing script. But I think having all (test file creation + actual tests) in one file is actually quite convenient.\r\n> \r\n> Good for me!\r\n> \r\n> One question though, will we ha... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/15",

"html_url": "https://github.com/huggingface/datasets/pull/15",

"diff_url": "https://github.com/huggingface/datasets/pull/15.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/15.patch",

"merged_at": "2020-04-27T14:48:02"

} | 15 | true |

[Download] Only create dir if not already exist | This was quite annoying to find out :D.

Some datasets save to the same directory, so we should only create a new directory if it doesn't already exist. | https://github.com/huggingface/datasets/pull/14 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/14",

"html_url": "https://github.com/huggingface/datasets/pull/14",

"diff_url": "https://github.com/huggingface/datasets/pull/14.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/14.patch",

"merged_at": "2020-04-23T08:27:33"

} | 14 | true |
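In Python 3 the usual idiom for this is `os.makedirs(..., exist_ok=True)`. A minimal sketch follows. The `ensure_dir` helper and the directory names are hypothetical, and this is not necessarily how the PR implemented the check:

```python
import os
import tempfile


def ensure_dir(path):
    """Create `path` and any missing parents; do nothing if the directory already exists.

    Note: exist_ok=True still raises if `path` exists but is a regular file.
    """
    os.makedirs(path, exist_ok=True)
    return path


with tempfile.TemporaryDirectory() as root:
    shared = os.path.join(root, "downloads", "extracted")
    ensure_dir(shared)  # first dataset creates the directory
    ensure_dir(shared)  # second dataset reuses it without raising FileExistsError
    print(os.path.isdir(shared))  # True
```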

[Make style] | Added Makefile and applied make style to all.

make style runs the following code:

```
style:
	black --line-length 119 --target-version py35 src
	isort --recursive src
```

It's the same code that is run in `transformers`. | https://github.com/huggingface/datasets/pull/13 | [

"I think this can be quickly reproduced. \r\nI use `black, version 19.10b0`. \r\n\r\nWhen running: \r\n`black nlp/src/arrow_reader.py` \r\nit gives me: \r\n\r\n```\r\nerror: cannot format /home/patrick/hugging_face/nlp/src/nlp/arrow_reader.py: cannot use --safe with this file; failed to parse source file. AST erro... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/13",

"html_url": "https://github.com/huggingface/datasets/pull/13",

"diff_url": "https://github.com/huggingface/datasets/pull/13.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/13.patch",

"merged_at": "2020-04-23T13:02:22"

} | 13 | true |

[Map Function] add assert statement if map function does not return dict or None | IMO, if a function is provided that is not a print statement (-> returns variable of type `None`) or a function that updates the datasets (-> returns variable of type `dict`), then a `TypeError` should be raised.

Not sure whether you had cases in mind where the user should do something else @thomwolf , but I think ... | https://github.com/huggingface/datasets/pull/12 | [

"Also added an assert statement that checks that, if a dict is returned by the function, all values of the `dict` are `lists`",

"Wait to merge until `make style` is set in place.",

"Updated the assert statements. Played around with multiple cases and it should be good now IMO. "

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/12",

"html_url": "https://github.com/huggingface/datasets/pull/12",

"diff_url": "https://github.com/huggingface/datasets/pull/12.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/12.patch",

"merged_at": "2020-04-24T06:29:03"

} | 12 | true |
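A sketch of the checks discussed in PR 12. It accepts `None` (e.g. a function that only prints), requires list values in a returned dict (following the later comment in the thread), and raises `TypeError` otherwise, as the PR description suggests. `check_map_output` is a hypothetical name, not the library's function:

```python
def check_map_output(output):
    """Validate what a user function passed to `.map()` returned.

    Hypothetical sketch: None is accepted, a dict must have list values,
    and anything else raises TypeError.
    """
    if output is None:
        return
    if not isinstance(output, dict):
        raise TypeError(f"map function must return a dict or None, got {type(output).__name__}")
    for key, value in output.items():
        if not isinstance(value, list):
            raise TypeError(f"value for key {key!r} must be a list, got {type(value).__name__}")


check_map_output(None)
check_map_output({"input_ids": [[101, 102]]})
try:
    check_map_output([1, 2, 3])
except TypeError as err:
    print(err)  # map function must return a dict or None, got list
```

The sketch raises `TypeError` rather than using `assert`, because asserts are skipped when Python runs with `-O`.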

[Convert TFDS to HFDS] Extend script to also allow just converting a single file | Adds another argument to be able to convert only a single file | https://github.com/huggingface/datasets/pull/11 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/11",

"html_url": "https://github.com/huggingface/datasets/pull/11",

"diff_url": "https://github.com/huggingface/datasets/pull/11.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/11.patch",

"merged_at": "2020-04-21T20:47:00"

} | 11 | true |

Name json file "squad.json" instead of "squad.py.json" | https://github.com/huggingface/datasets/pull/10 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/10",

"html_url": "https://github.com/huggingface/datasets/pull/10",

"diff_url": "https://github.com/huggingface/datasets/pull/10.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/10.patch",

"merged_at": "2020-04-21T20:48:06"

} | 10 | true | |

[Clean up] Datasets | Clean up `nlp/datasets` folder.

As I understood, eventually the `nlp/datasets` shall not exist anymore at all.

The folder `nlp/datasets/nlp` is kept for the moment, but won't be needed in the future, since it will live on S3 (actually it already does) at: `https://s3.console.aws.amazon.com/s3/buckets/datasets.h... | https://github.com/huggingface/datasets/pull/9 | [

"Yes!"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/9",

"html_url": "https://github.com/huggingface/datasets/pull/9",

"diff_url": "https://github.com/huggingface/datasets/pull/9.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/9.patch",

"merged_at": "2020-04-21T20:49:58"

} | 9 | true |

Fix issue 6: error when the citation is missing in the DatasetInfo | https://github.com/huggingface/datasets/pull/8 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/8",

"html_url": "https://github.com/huggingface/datasets/pull/8",

"diff_url": "https://github.com/huggingface/datasets/pull/8.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/8.patch",

"merged_at": "2020-04-20T13:24:12"

} | 8 | true | |

Fix issue 5: allow empty datasets | https://github.com/huggingface/datasets/pull/7 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7",

"html_url": "https://github.com/huggingface/datasets/pull/7",

"diff_url": "https://github.com/huggingface/datasets/pull/7.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/7.patch",

"merged_at": "2020-04-20T13:23:47"

} | 7 | true | |

Error when citation is not given in the DatasetInfo | The following error is raised when the `citation` parameter is missing when we instantiate a `DatasetInfo`:

```

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/home/jplu/dev/jplu/datasets/src/nlp/info.py", line 338, in __repr__

citation_pprint = _indent('"""{}"""'.format(self.... | https://github.com/huggingface/datasets/issues/6 | [

"Yes looks good to me.\r\nNote that we may refactor quite strongly the `info.py` to make it a lot simpler (it's very complicated for basically a dictionary of info I think)",

"No, problem ^^ It might just be a temporary fix :)",

"Fixed."

] | null | 6 | false |

ValueError when a split is empty | When a split is empty either TEST, VALIDATION or TRAIN I get the following error:

```

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/home/jplu/dev/jplu/datasets/src/nlp/load.py", line 295, in load

ds = dbuilder.as_dataset(**as_dataset_kwargs)

File "/home/jplu/dev/jplu/data... | https://github.com/huggingface/datasets/issues/5 | [

"To fix this I propose to modify only the file `arrow_reader.py` with few updates. First update, the following method:\r\n```python\r\ndef _make_file_instructions_from_absolutes(\r\n name,\r\n name2len,\r\n absolute_instructions,\r\n):\r\n \"\"\"Returns the files instructions from the absolu... | null | 5 | false |

[Feature] Keep the list of labels of a dataset as metadata | It would be useful to keep the list of the labels of a dataset as metadata. Either directly in the `DatasetInfo` or in the Arrow metadata. | https://github.com/huggingface/datasets/issues/4 | [

"Yes! I see mostly two options for this:\r\n- a `Feature` approach like currently (but we might deprecate features)\r\n- wrapping in a smart way the Dictionary arrays of Arrow: https://arrow.apache.org/docs/python/data.html?highlight=dictionary%20encode#dictionary-arrays",

"I would have a preference for the secon... | null | 4 | false |

[Feature] More dataset outputs | Add the following dataset outputs:

- Spark

- Pandas | https://github.com/huggingface/datasets/issues/3 | [

"Yes!\r\n- pandas will be a one-liner in `arrow_dataset`: https://arrow.apache.org/docs/python/generated/pyarrow.Table.html#pyarrow.Table.to_pandas\r\n- for Spark I have no idea. let's investigate that at some point",

"For Spark it looks to be pretty straightforward as well https://spark.apache.org/docs/latest/sq... | null | 3 | false |

Issue to read a local dataset | Hello,

As proposed by @thomwolf, I am opening an issue to explain what I'm trying to do, so far without success. What I want to do is create and load a local dataset; the script I wrote is the following:

```python

import os

import csv

import nlp

class BbcConfig(nlp.BuilderConfig):

def __init__(self, **kwarg... | https://github.com/huggingface/datasets/issues/2 | [

"My first bug report ❤️\r\nLooking into this right now!",

"Ok, there are some news, most good than bad :laughing: \r\n\r\nThe dataset script now became:\r\n```python\r\nimport csv\r\n\r\nimport nlp\r\n\r\n\r\nclass Bbc(nlp.GeneratorBasedBuilder):\r\n VERSION = nlp.Version(\"1.0.0\")\r\n\r\n def __init__(sel... | null | 2 | false |

changing nlp.bool to nlp.bool_ | https://github.com/huggingface/datasets/pull/1 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1",

"html_url": "https://github.com/huggingface/datasets/pull/1",

"diff_url": "https://github.com/huggingface/datasets/pull/1.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/1.patch",

"merged_at": "2020-04-14T12:01:40"

} | 1 | true |
