📋 Overview

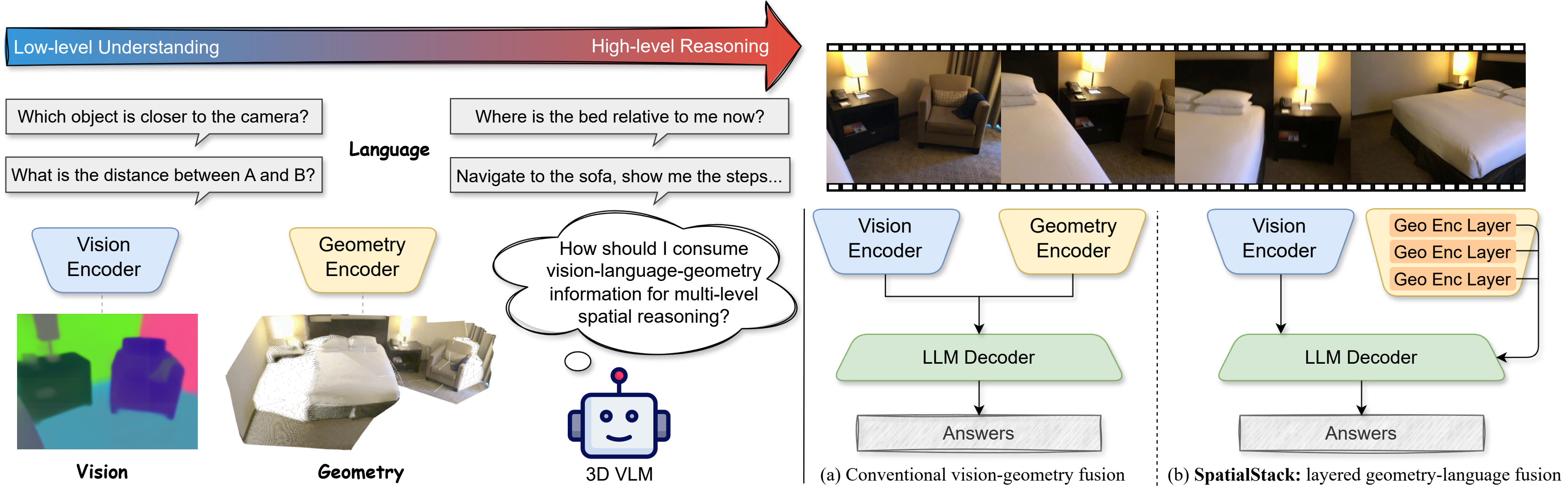

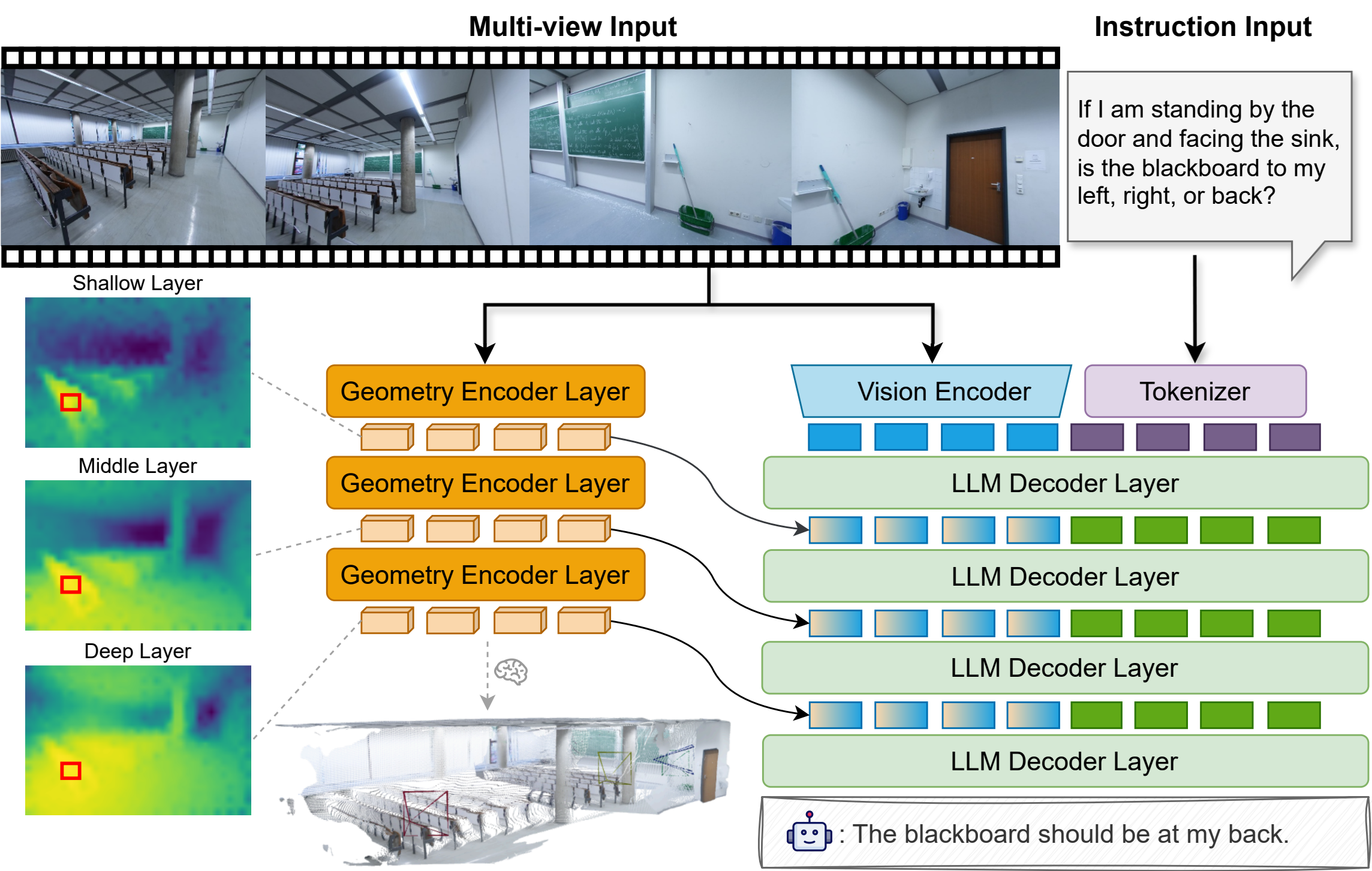

SpatialStack-Qwen3.5-4B is a geometry-augmented vision-language model designed for 3D spatial reasoning. It extends Qwen3.5-4B with a parallel VGGT-1B geometry stream, using a novel layered geometry-language fusion mechanism that progressively aligns multi-level geometric and language features across model layers.

Geometry features from encoder layers [11, 17, 23] are projected and injected into decoder layers [0, 1, 2], preserving both fine local structure and higher-level spatial context.
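The layer pairing described above can be illustrated with a small, framework-free sketch. It is not the SpatialStack implementation: the in-order pairing of encoder and decoder layers, the purely additive update (suggested only by the name "Language-Add"), and the helper names `build_fusion_map` and `add_geometry` are all assumptions made for illustration, and the MLP projection step is left out.

```python
from typing import Dict, List, Sequence

Vector = List[float]

# Layer indices taken from the model card.
ENCODER_LAYERS = [11, 17, 23]  # geometry encoder layers that are tapped
FUSION_LAYERS = [0, 1, 2]      # decoder layers that receive the features


def build_fusion_map(encoder_layers: Sequence[int],
                     fusion_layers: Sequence[int]) -> Dict[int, int]:
    """Map each decoder layer to the encoder layer feeding it.

    Assumption: layers are paired in order (11 -> 0, 17 -> 1, 23 -> 2).
    """
    if len(encoder_layers) != len(fusion_layers):
        raise ValueError("need one encoder layer per fusion layer")
    return dict(zip(fusion_layers, encoder_layers))


def add_geometry(hidden: List[Vector],
                 geometry: List[Vector],
                 visual_positions: Sequence[int]) -> List[Vector]:
    """Add one already-projected geometry vector to each visual-token state.

    Hypothetical helper: text-token states are returned unchanged.
    """
    if len(geometry) != len(visual_positions):
        raise ValueError("need one geometry vector per visual token")
    fused = [list(vec) for vec in hidden]  # copy so the input is not mutated
    for geo_vec, pos in zip(geometry, visual_positions):
        fused[pos] = [h + g for h, g in zip(fused[pos], geo_vec)]
    return fused
```

Under these assumptions, `build_fusion_map(ENCODER_LAYERS, FUSION_LAYERS)` pairs the shallowest tapped encoder layer with the first decoder layer, so lower-level geometric detail enters the language model earliest.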

🏗️ Architecture

| Component | Detail |
|---|---|
| Base Model | Qwen/Qwen3.5-4B |
| Geometry Encoder | facebook/VGGT-1B |
| Encoder Layers | [11, 17, 23] |
| Fusion Layers | [0, 1, 2] |
| Fusion Method | DeepStack Language-Add |
| Geometry Merger | MLP |
| Precision | bfloat16 |

📊 Benchmark Results

| Benchmark | Metric | Score |
|---|---|---|
| VSI-Bench | Average | 67.5 |
| CV-Bench | Average | 85.5 |
| CV-Bench | 3D | 92.2 |

Results from the SpatialStack project page and paper.

🚀 Quick Start

Installation

git clone https://github.com/jzh15/SpatialStack.git
cd SpatialStack
pip install -e . --no-deps

For full environment setup (PyTorch, flash_attn, Qwen3.5 dependencies), see the repo README.
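Because the install command uses `--no-deps`, the dependencies have to be present already. A quick way to see what is missing is sketched below. The import names `torch`, `flash_attn`, and `transformers` are assumptions based on the packages named above; the repo README remains the authoritative list.

```python
import importlib.util
from typing import Iterable, List


def missing_modules(names: Iterable[str]) -> List[str]:
    """Return the top-level module names that cannot be found in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    # Assumed import names; adjust them to match the repo README.
    required = ["torch", "flash_attn", "transformers"]
    missing = missing_modules(required)
    print("missing:", ", ".join(missing) if missing else "none")
```

`find_spec` only locates a module without importing it, so the check stays fast even for heavy packages.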

Single-Image Inference

python scripts/inference/infer.py \
  --model-path Journey9ni/SpatialStack-Qwen3.5-4B \
  --image assets/sofas.jpg \
  --prompt "Describe this scene in a few complete sentences." \
  --disable-thinking \
  --max-new-tokens 128
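To run the same command over several images, it may help to assemble the argument list in Python. The sketch below uses only the flags shown above; `build_infer_command` is a hypothetical helper, not part of the SpatialStack repo, and it assumes the script is invoked from the repo root.

```python
import shlex
from typing import List


def build_infer_command(image: str,
                        prompt: str,
                        model_path: str = "Journey9ni/SpatialStack-Qwen3.5-4B",
                        max_new_tokens: int = 128,
                        disable_thinking: bool = True) -> List[str]:
    """Build the argument list for scripts/inference/infer.py."""
    cmd = [
        "python", "scripts/inference/infer.py",
        "--model-path", model_path,
        "--image", image,
        "--prompt", prompt,
    ]
    if disable_thinking:
        cmd.append("--disable-thinking")
    cmd += ["--max-new-tokens", str(max_new_tokens)]
    return cmd


if __name__ == "__main__":
    cmd = build_infer_command(
        "assets/sofas.jpg",
        "Describe this scene in a few complete sentences.",
    )
    # Print a shell-safe version; pass `cmd` to subprocess.run(cmd, check=True)
    # from the repo root to actually execute it.
    print(shlex.join(cmd))
```

Passing the list form to `subprocess.run` avoids shell quoting problems when prompts contain quotes or spaces.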

VSI-Bench Evaluation

MODEL_PATH=Journey9ni/SpatialStack-Qwen3.5-4B \
MODEL_IMPL=qwen3_5 \
MODEL_ARGS_BASE="pretrained=Journey9ni/SpatialStack-Qwen3.5-4B,use_flash_attention_2=true,max_num_frames=32,max_length=12800,geometry_encoder_path=facebook/VGGT-1B,disable_thinking=true" \
OUTPUT_ROOT=logs/eval/spatialstack_qwen35_4b \
BENCHMARKS="vsibench" \
bash scripts/evaluation/eval.sh
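The `MODEL_ARGS_BASE` value is a comma-separated list of `key=value` pairs, which is easy to mistype by hand. A minimal sketch for generating it from a dictionary follows; `format_model_args` is a hypothetical helper, and rendering booleans as lowercase `true`/`false` is inferred from the string above rather than from the evaluation script itself.

```python
from typing import Dict


def format_model_args(args: Dict[str, object]) -> str:
    """Join key=value pairs with commas, rendering booleans as true/false."""
    parts = []
    for key, value in args.items():
        if isinstance(value, bool):
            value = "true" if value else "false"
        parts.append(f"{key}={value}")
    return ",".join(parts)


# Values copied from the evaluation command above.
MODEL_ARGS = {
    "pretrained": "Journey9ni/SpatialStack-Qwen3.5-4B",
    "use_flash_attention_2": True,
    "max_num_frames": 32,
    "max_length": 12800,
    "geometry_encoder_path": "facebook/VGGT-1B",
    "disable_thinking": True,
}

if __name__ == "__main__":
    print(format_model_args(MODEL_ARGS))
```

The printed string can be exported as `MODEL_ARGS_BASE` before calling `scripts/evaluation/eval.sh`, for example when sweeping `max_num_frames`.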

⚠️ Limitations

- Requires a separate geometry encoder (VGGT-1B) alongside the vision-language backbone.

- Optimized for spatial reasoning benchmarks; not intended for general-purpose multimodal chat.

- Not validated for safety-critical use, robotics deployment, or real-world decision making.

📝 Citation

@article{zhang2026spatialstack,
  title={SpatialStack: Layered Geometry-Language Fusion for 3D VLM Spatial Reasoning},
  author={Zhang, Jiang and Zhou, Shijie and Liu, Bangya and Kadambi, Achuta and Fan, Zhiwen},
  journal={arXiv preprint arXiv:2603.27437},
  year={2026}
}
